As you know, Salty fights for digital visibility for women, trans and nonbinary people every day – and is working tirelessly to ensure our stories are not erased from the internet.

Since 2019, Salty’s Algorithmic Bias Collective, along with the University of Michigan, has been collecting and analyzing the online experiences of those in our community – and now our second groundbreaking report, “Censorship of Marginalized Communities on Instagram,” is ready to be shared with you. We know that the tech industry rarely listens to our stories, and our hope is that hard data will help illuminate the injustices facing marginalized people online, and help build a better internet for everyone.

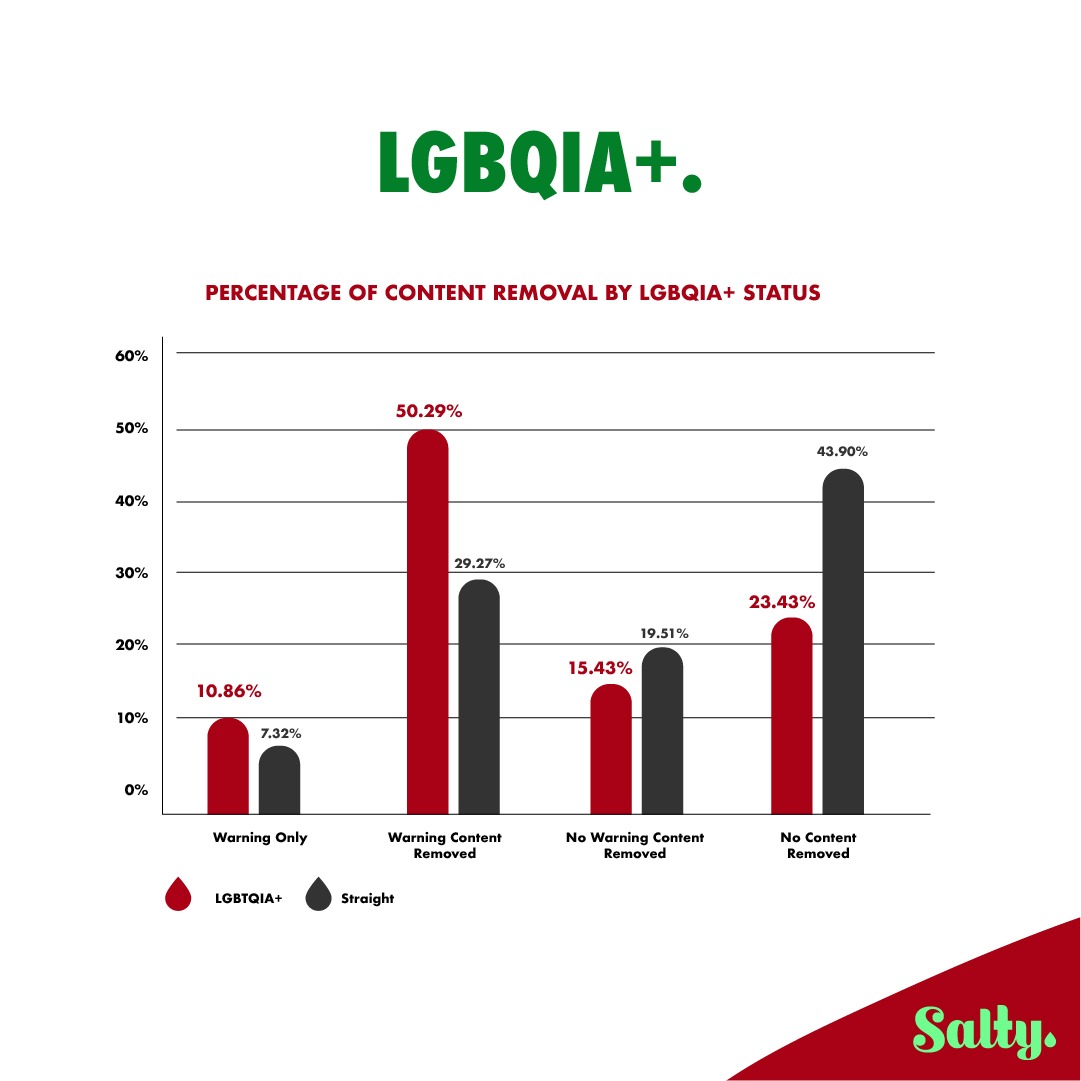

In this survey and report, we found substantial evidence of censorship on Instagram for marginalized groups including people who are transgender and/or nonbinary, LGBQIA, BIPOC, disabled, sex workers, and/or sex educators. Instead of releasing this report through an industry journal, we decided to release it via the Salty channels: this information should be available to everyone.

Below are some excerpts and our main findings. If you’d like to download the report in its entirety, click here. To support the labor that Salty puts towards fighting for visibility online, we ask that you make a $10 contribution to download this document in full.

MAIN FINDINGS

We found substantial evidence of censorship on Instagram for marginalized groups including people who are transgender and/or nonbinary, LGBQIA, BIPOC, disabled, sex workers, and/or sex educators.

Salty Algorithmic Bias Research Collective

Almost all marginalized groups experienced censorship on Instagram at higher percentages than those from more privileged groups.

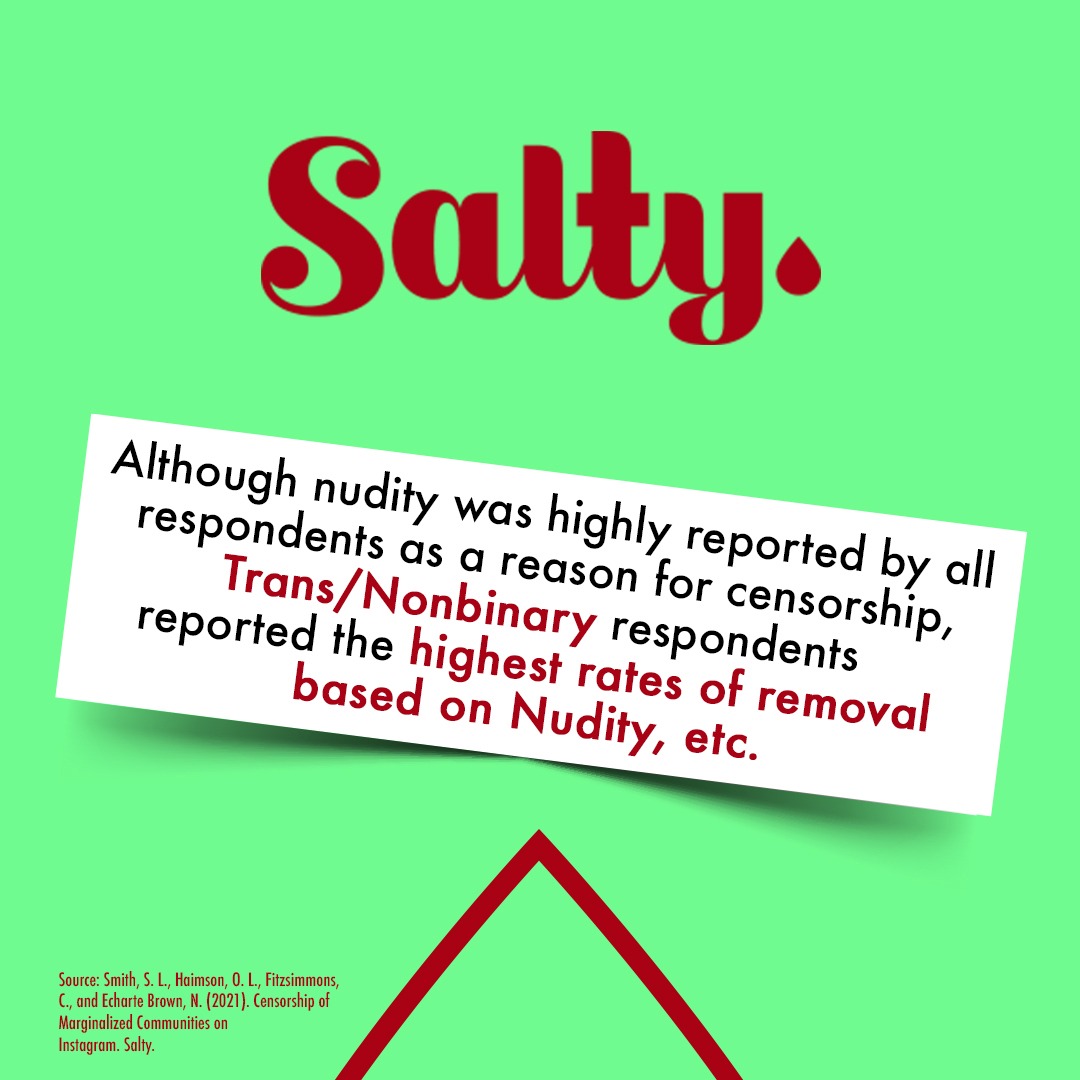

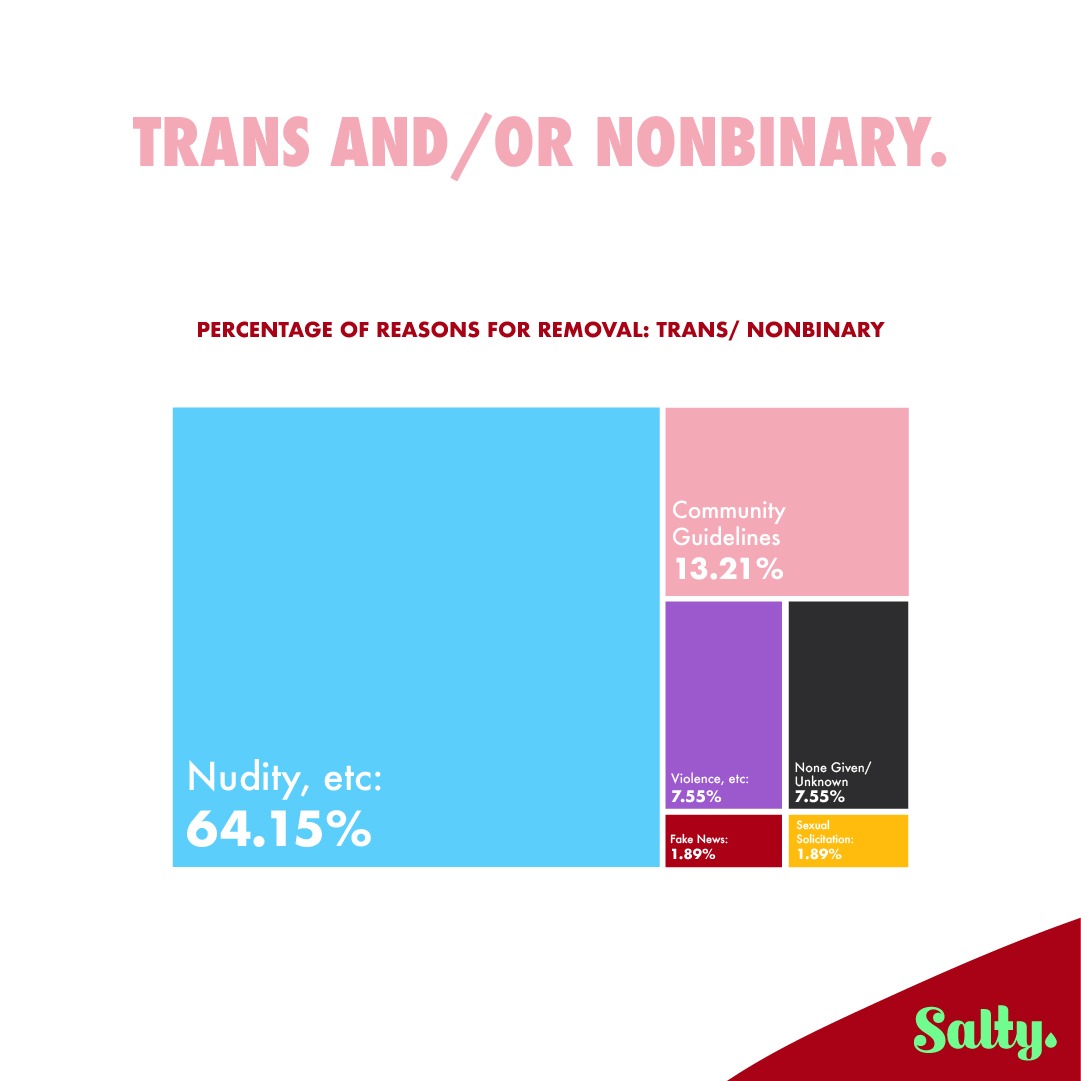

• For most marginalized groups, the most prominent reason for content removals was Nudity, etc.

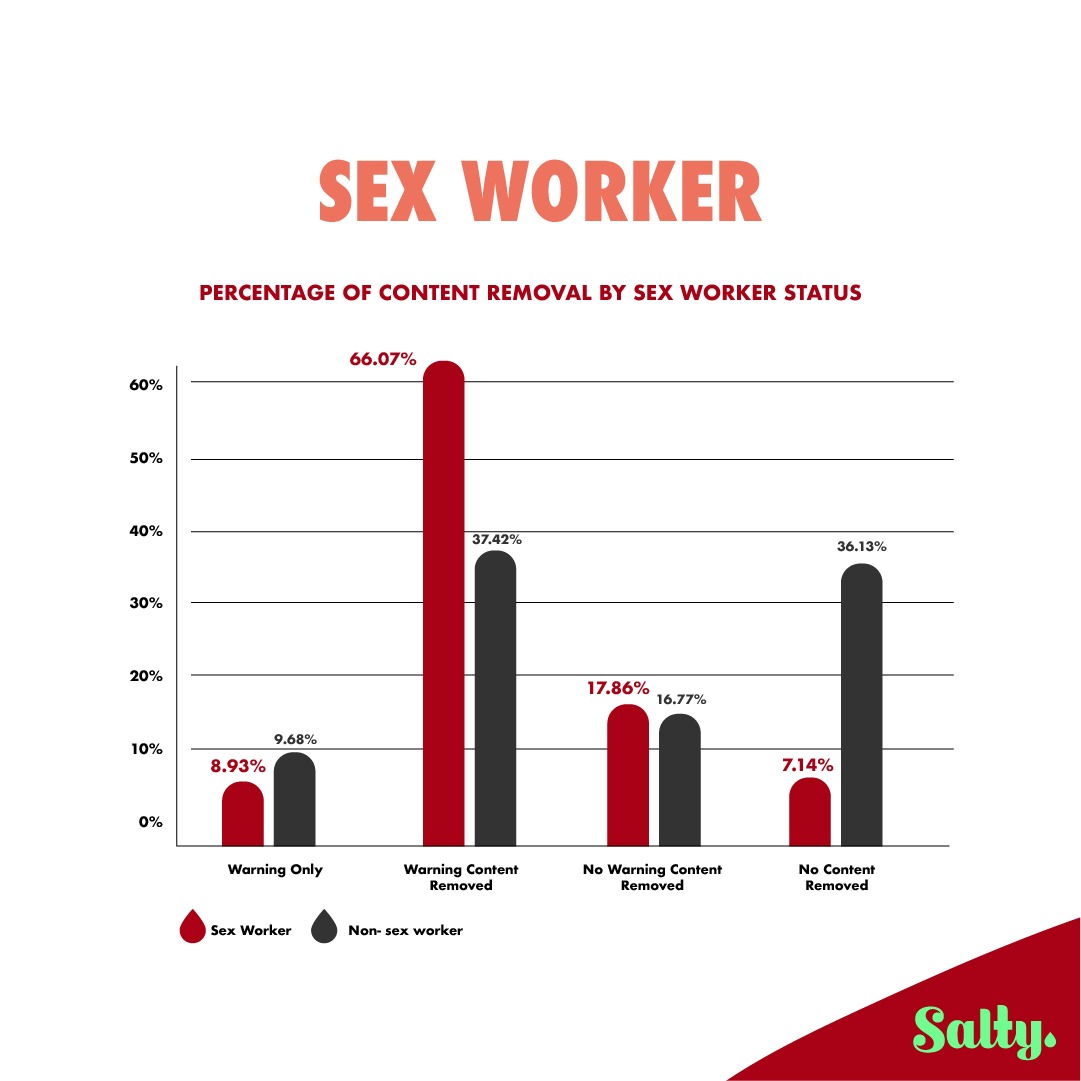

• Compared to other marginalized respondents, Sex Workers are more likely to report being censored in general.

• Disabled people are more likely to report being censored for Self-injury/Self-harm or Violence, etc. compared to other marginalized respondents.

• Although nudity was highly reported by all respondents as a reason for censorship, Trans/Nonbinary respondents experienced the highest rates of removal based on Nudity, etc.

• Compared to other marginalized respondents, BIPOC were most likely to report being censored for Fake news/False information.

• Among marginalized respondents, Plus-sized respondents report the highest percentage of the blanket violation: “Community Guidelines”.

• Even though most participants who experienced content removals appealed these decisions, over 90% of those who appealed either received no response or their content was not reinstated.

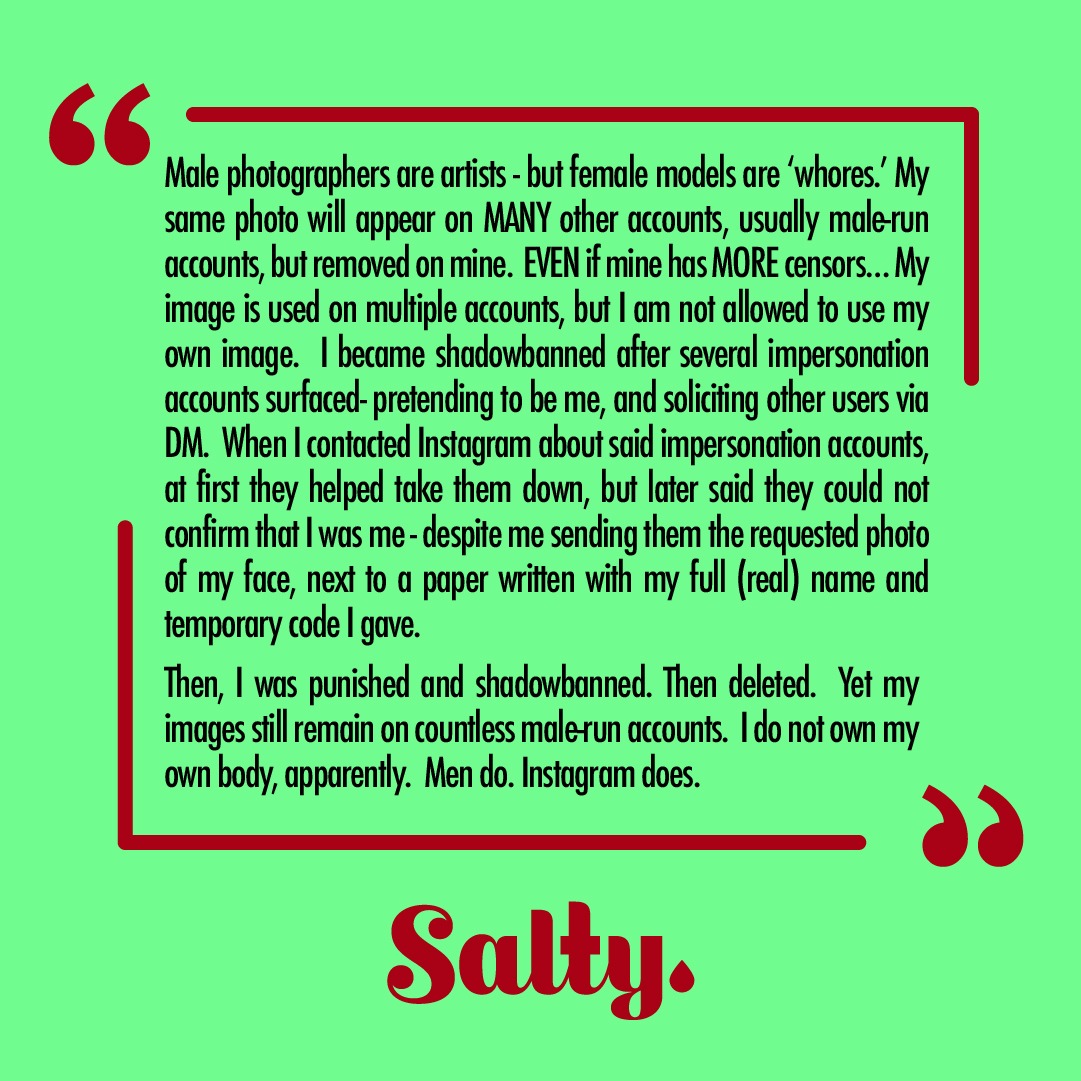

• Narrative quotes from participants highlight the frustration, anger, and disappointment people felt when their content was removed, and the personal and sometimes financial consequences marginalized people face when content removals restrict their ability to express themselves online.

• Our results highlight the problematic and discriminatory ways marginalized people’s bodies and identities are sexualized and policed on social media.

Taken together, our results show how disproportionate content removals cause marginalized groups to face substantial challenges and consequences when attempting to use online spaces like Instagram.

We need your voice.

Please take part in our next survey here:

DOWNLOAD SALTY’S 2ND ALGORITHMIC BIAS REPORT HERE

Algorithmic Bias Report: September 2021 (PDF Download)

This 27-page PDF report is based on information volunteered by Salty’s community and compiled by the Salty Algorithmic Bias Research Collective, in collaboration with the University of Michigan.

Since the present survey was distributed to the Salty community, it primarily included people who are marginalized in one or more ways. Thus, the comparisons to privileged groups detailed in this report are likely much different than they would be if we compared participants’ experiences to the general population.

It is likely that the disparities in content removal rates observed in the present report would be even greater in a wider, more generalized sample (e.g., as found in Haimson et al. 2021). For this reason, our next survey and report will attempt to survey a wider audience.